I built a basic test room, with a desk at the same height as my physical desk, where I have been experimenting with different layouts of The Future of Text for VR. An introduction/overview is in this video, based on a presentation to the Future Text Lab on the 17th of October 2022.

Two Page Spread

The first environment is simply the PDF version of the book in VR, opened to a two page spread to experiment with reading in VR. Further refinement will be necessary, but I have moved on to the issue of finding one's way around the volume rather than reading a single section, as follows.

https://hubs.mozilla.com/ompRmse

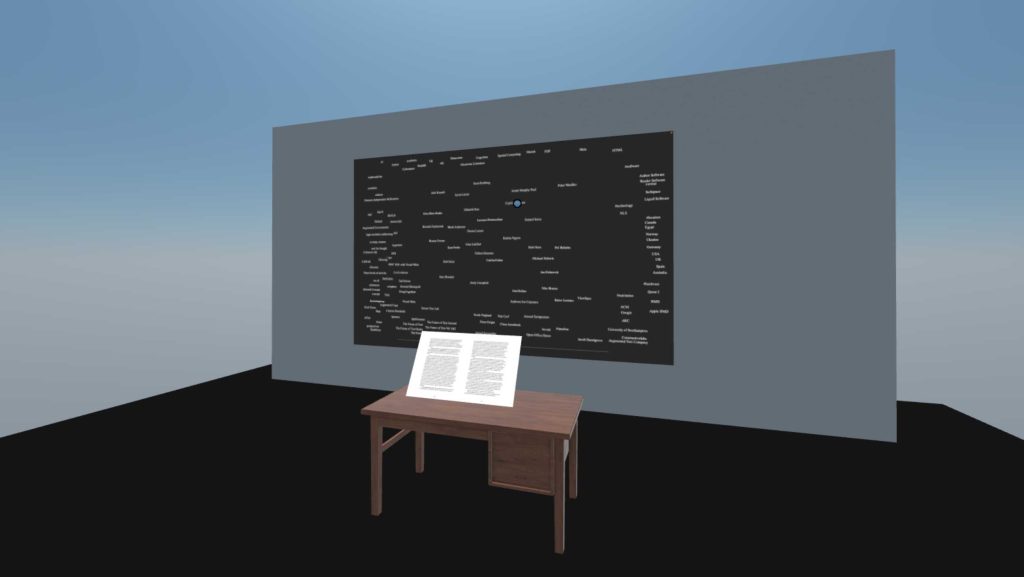

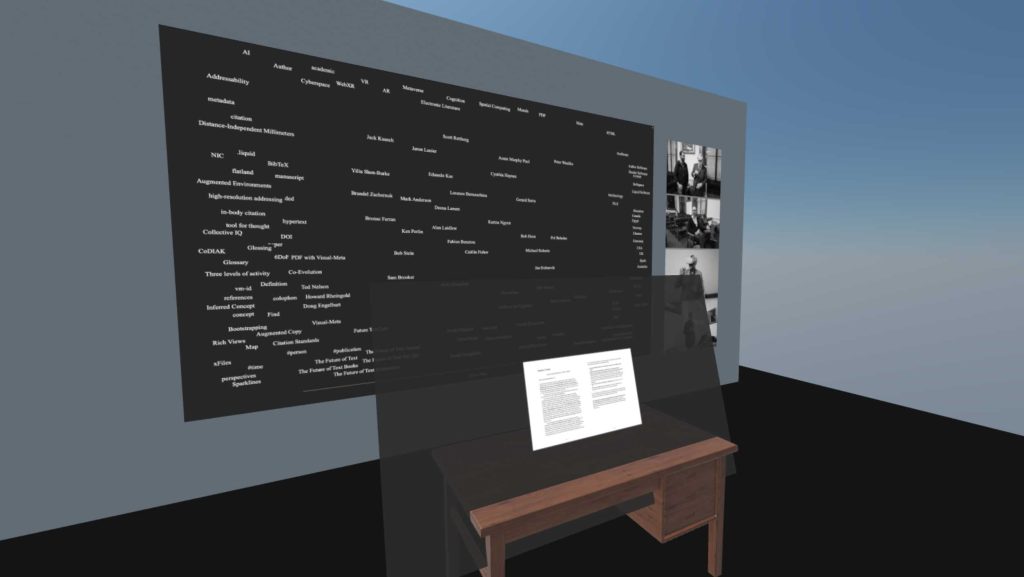

With Map

Here an ‘Author’ style ‘Map’ or Graph has been included. Interactions are discussed in the video above.

https://hubs.mozilla.com/ihTQoAi

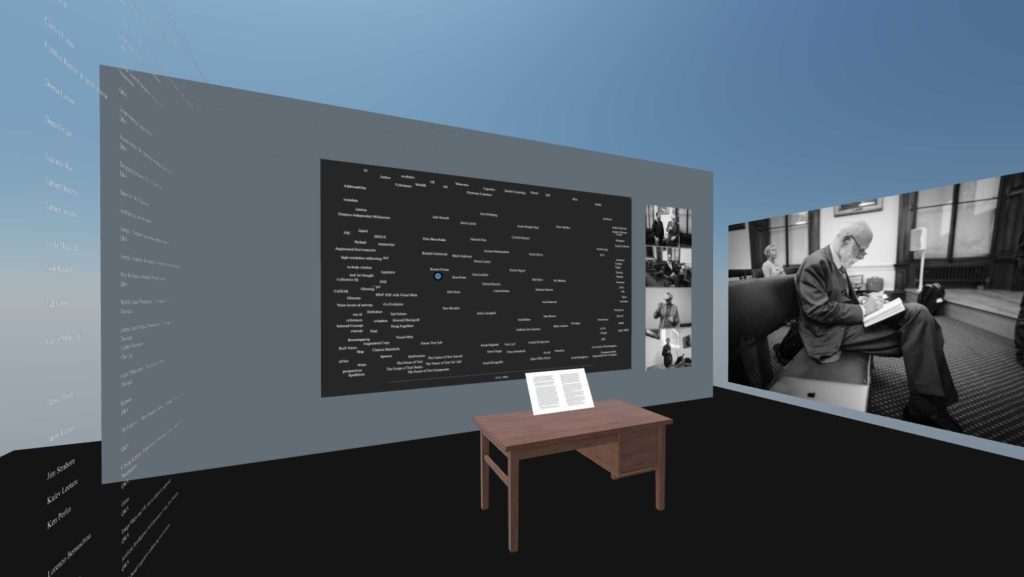

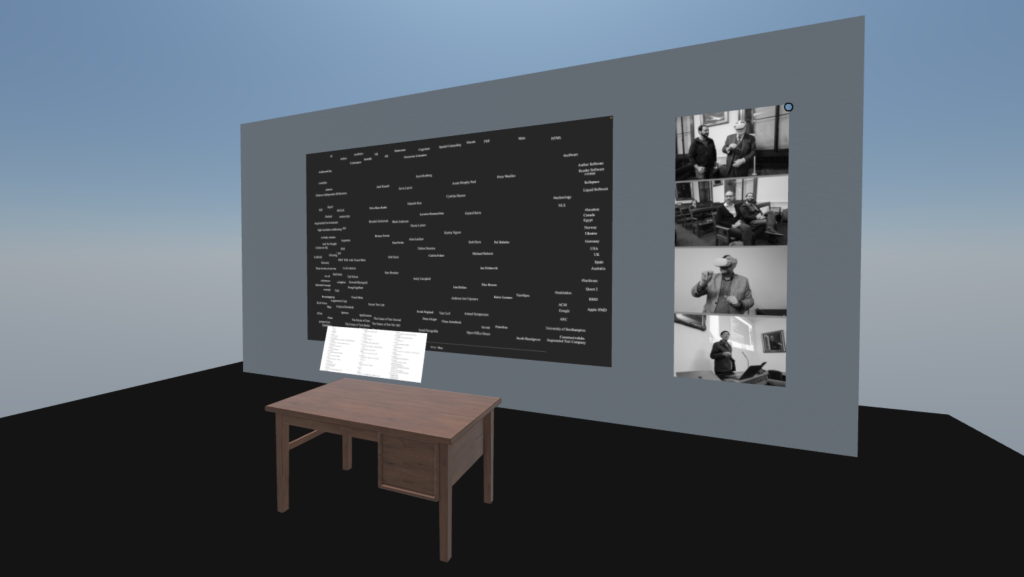

With Map & Pics

Here the environment has been filled in with some relevant pictures on the side, from the Future of Text Symposium, including one picture dragged out on the right to be large.

https://hubs.mozilla.com/hKD2WA9

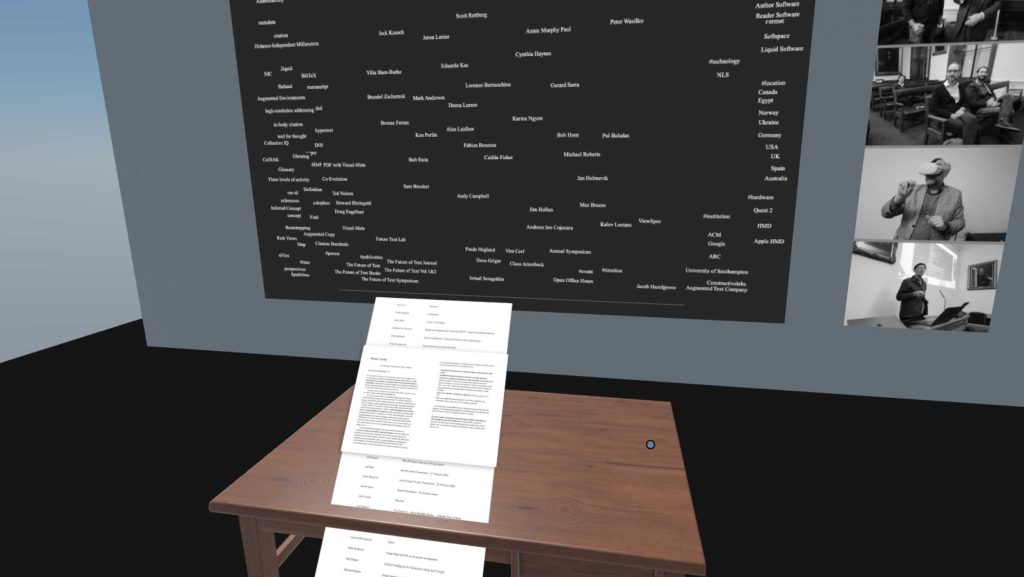

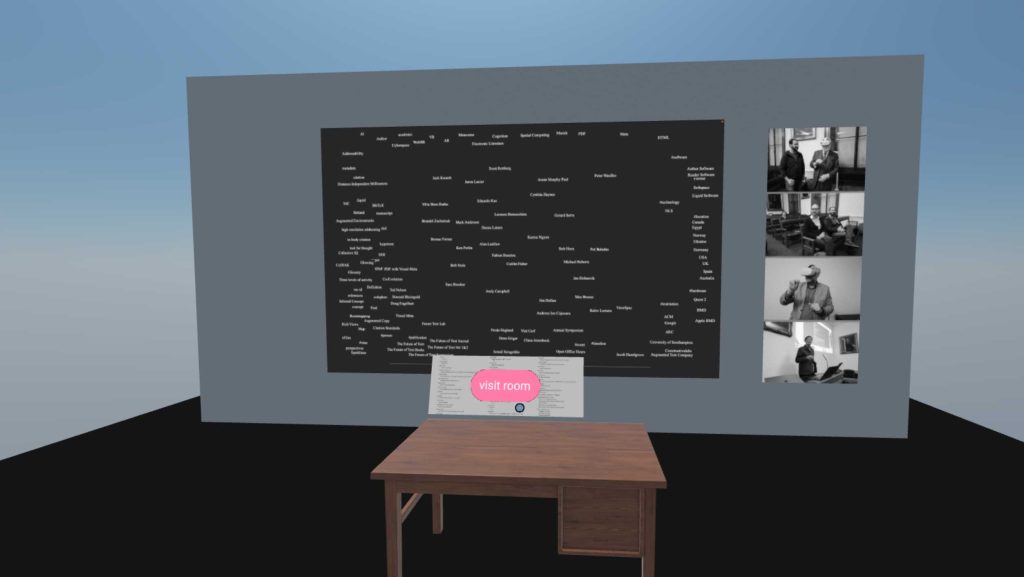

Menu with preview

In this example the idea is that it would actually be nice to go through a table of contents at a more ‘paper-scale’, so I imagine a long scroll of ‘paper’ where the user can tap on any article to see its first page and then either fold it away again or keep reading, folding away the table of contents instead. I have experimented with horizontal (see below) and vertical tables of contents for this and it does seem, to me anyway, that vertical is easiest to scan.

https://hubs.mozilla.com/r2ksN2i

Two page spread w ‘read-block’

Here I have experimented with providing a dark, semi-transparent screen around the document when reading, to see if it helps with focus. Of course, the whole room can be dimmed as well.

https://hubs.mozilla.com/SpVik7g

Horizontal Table of Contents

Here the table of contents is laid out horizontally on the table, at a human-readable size.

https://hubs.mozilla.com/2fSVUVk

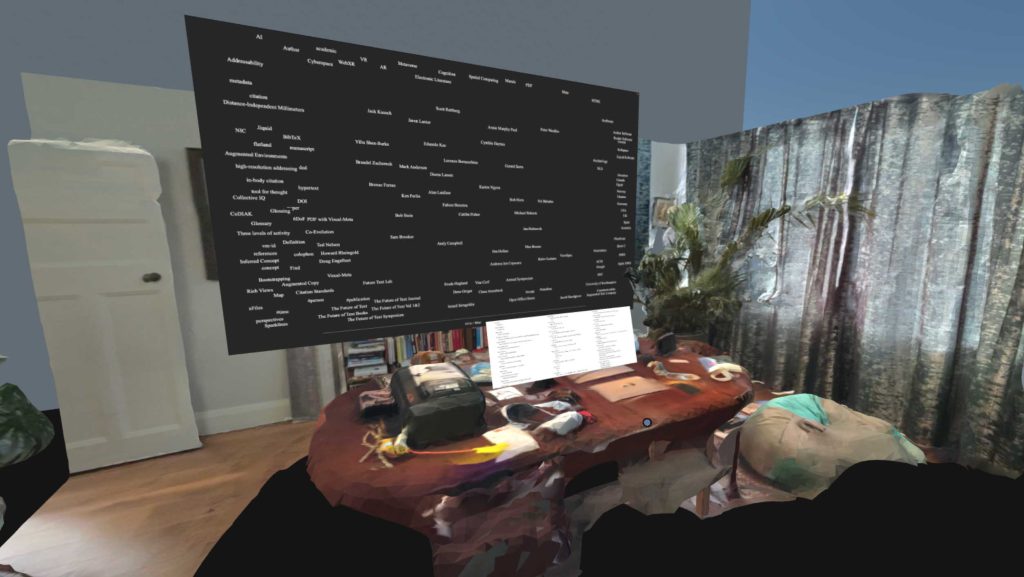

Rough Lidar scanned room with same information

I scanned my work room, when it was pretty messy(!), to see what working in my own environment would be like, expecting to be able to try this in AR with the Quest Pro, due to arrive soon.

https://hubs.mozilla.com/ZBbhZq3

Room with Open/closed document

Here you can tap on the document to toggle the room. It’s a link and not very elegant, but then neither is going back to this page.

https://hubs.mozilla.com/Bo4Hzo2

All first pages of articles on wall (for Fabien)

In our call on the 17th of October Fabien suggested we experiment with cutting up a book and pasting all the pages on a wall to see what it would be like to get a sense of the book. In this case I only took the first page of level 1 headings, as I think that should provide a good intro, but this of course does not show any images from further in the articles. To be further experimented with.

https://hubs.mozilla.com/4ErTKvB
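
For anyone who wants to try other wall arrangements, here is a minimal sketch of how the first pages could be placed in a grid. This is not how the Hubs room above was built. The function name, the metre-based dimensions and the gap are all assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    index: int   # position of the page in reading order
    x: float     # horizontal centre of the page, measured from the wall's left edge
    y: float     # vertical centre of the page, measured downwards (negative) from the top row


def layout_pages_on_wall(page_count, wall_width, page_width, page_height, gap=0.05):
    """Place pages left to right, top to bottom, wrapping when the wall is full.

    All sizes are hypothetical and use the same unit (for example metres).
    """
    if page_count < 0:
        raise ValueError("page_count must not be negative")
    if page_width <= 0 or page_height <= 0:
        raise ValueError("page dimensions must be positive")

    # How many pages fit side by side, always allowing at least one.
    columns = max(1, int((wall_width + gap) // (page_width + gap)))

    placements = []
    for index in range(page_count):
        row, col = divmod(index, columns)
        x = col * (page_width + gap) + page_width / 2
        y = -(row * (page_height + gap) + page_height / 2)
        placements.append(Placement(index, x, y))
    return placements
```

Calling `layout_pages_on_wall(7, 1.0, 0.2, 0.3, gap=0.1)` fits three pages per row, so the seven pages wrap onto three rows. Changing the gap or page size is a quick way to compare denser and sparser walls before building them in VR.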

All first pages of articles at half height

Same as above, but here it’s only the top half of each page since that’s where the title is. Interaction could be touch to see full page.

https://hubs.mozilla.com/BqBqt4b

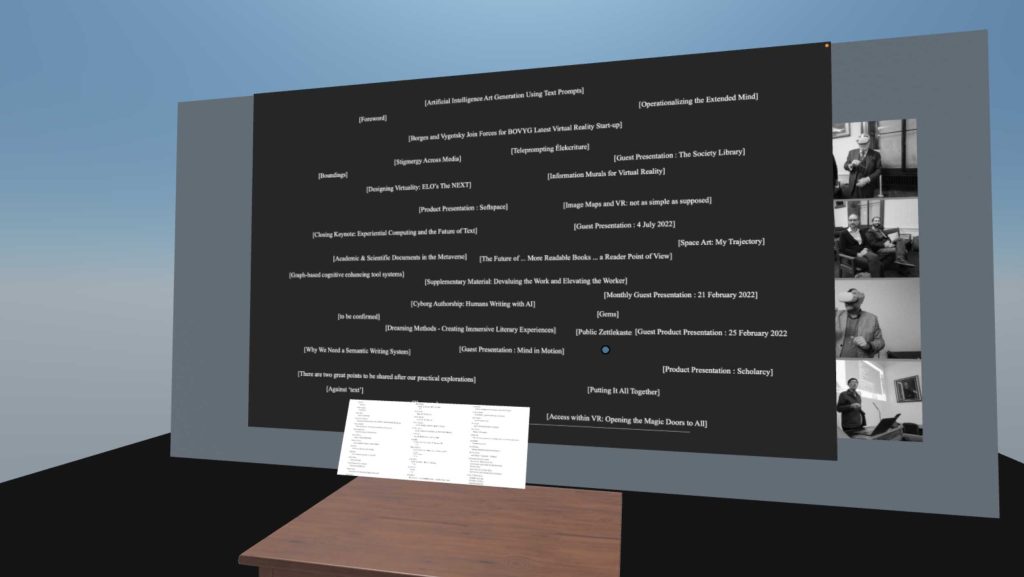
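
If the pages are exported as images (an assumption, since the room above was put together by hand), the half-height crop could be computed with something like the sketch below. The function name and the pixel sizes are hypothetical. The returned tuple uses the (left, upper, right, lower) order that Pillow's `Image.crop` accepts.

```python
import math


def top_crop_box(width, height, fraction=0.5):
    """Return a (left, upper, right, lower) box covering the top part of a page image.

    `fraction` is the share of the page height to keep, measured from the top.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be greater than 0 and at most 1")
    # Round up so that a title sitting right on the boundary is not cut off.
    return (0, 0, width, math.ceil(height * fraction))
```

A fraction a little above one half may work better for articles with long titles; that is something to test by eye.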

Map of [articles]

Here I have taken the names of all the articles and written them with [boxes], following the suggestion made in the video at the top of the page, and it is clear that, as shown here, it is a mess.

I cannot currently make the text small in the Author Map, which does not help, but it would be interesting to have a very large Map in VR at some point.

https://hubs.mozilla.com/kmFetSU

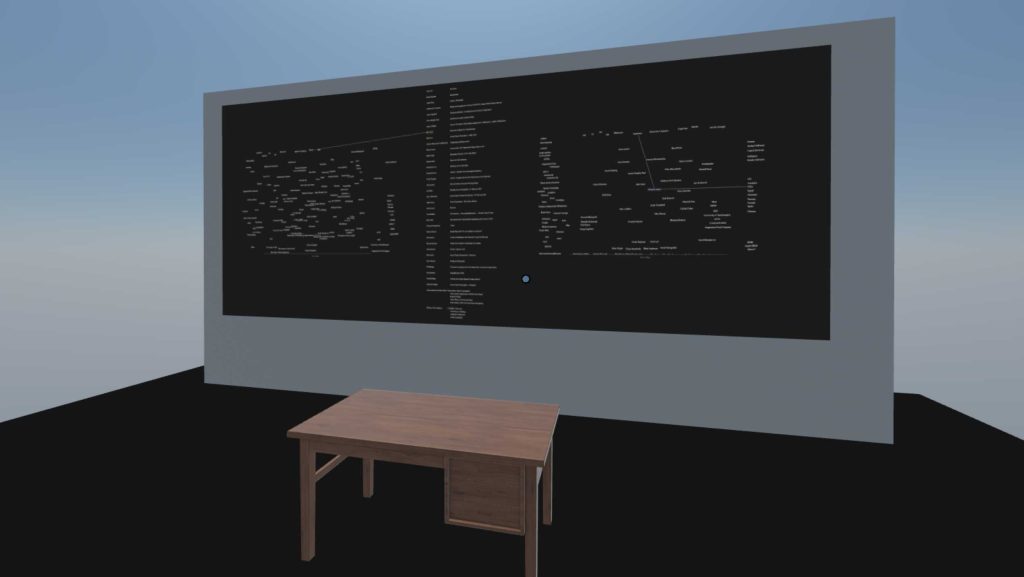

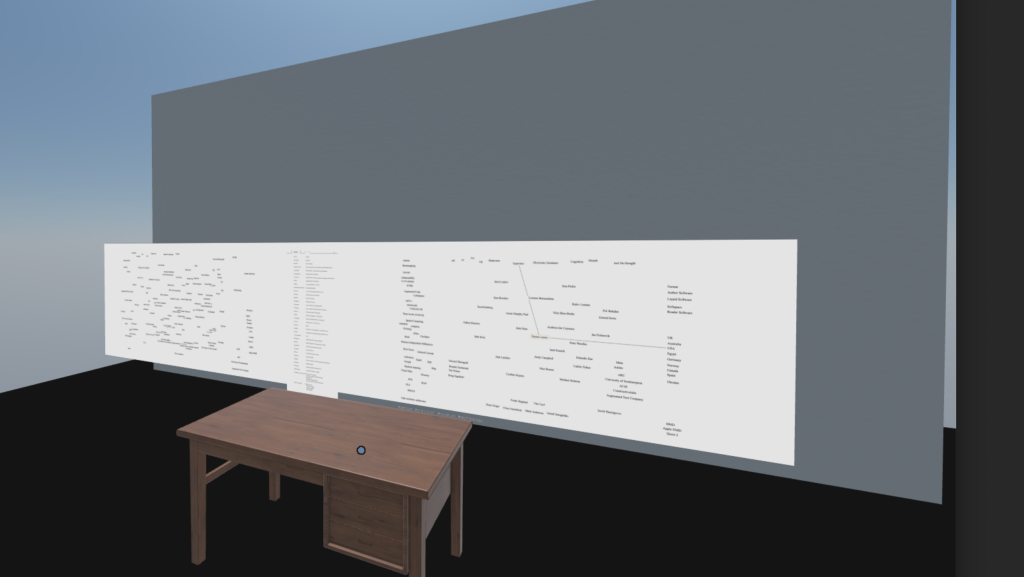

Huge Map (3x) with Central Column Light

I decided to play around with a huge Map, 3 times the size of a normal screen, with a column in the middle.

In the central column the user can only choose how the contents are arranged and what to show or hide, and cannot take them out of the column view. A connected Map sits on either side.

In this view there is no open document in the reading position, but the table is kept as a barrier to see how this would work, or not, from a seated position.

https://hubs.mozilla.com/ZBMTiAn

Huge Map (3x) with Central Column Dark

Same as above, but dark and the Map has been moved much closer to the user, almost intersecting the table, to test visual style and readability at different distances.

https://hubs.mozilla.com/LS5UZmQ

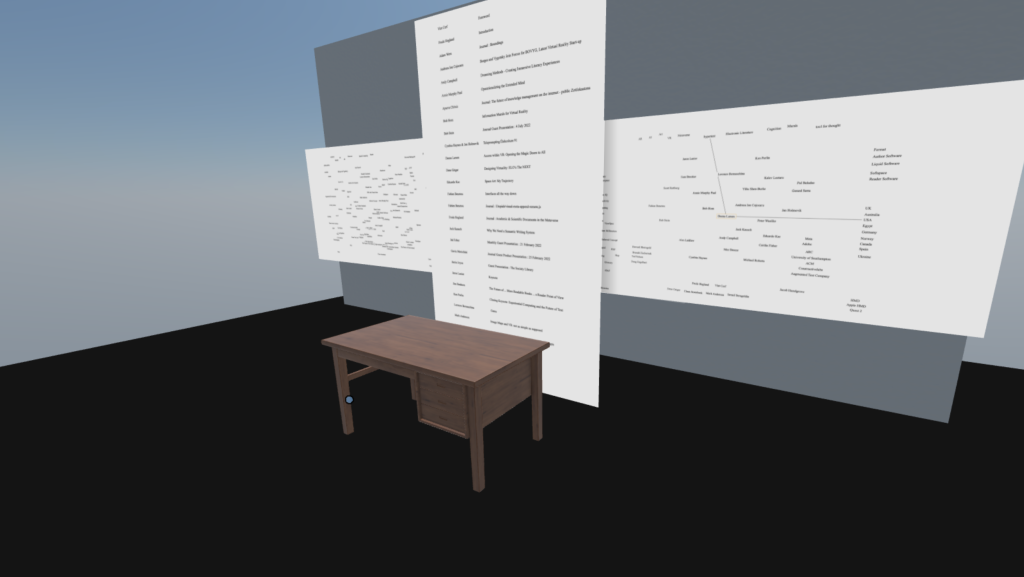

Central Column Highlighted

Here the central column is its own unit so that the user can scroll and scale it, while it stays connected with the Map shown on either side.

https://hubs.mozilla.com/uB99jtZ

Conclusion: More human scale, more displays?

The upshot is that I think we should actually play around with different and connected displays. Not fully 3D but we need to start somewhere.

Open the book on the table as a PDF, or even as a physical copy where the AR system performs OCR to identify the book. Now a large map appears, along with a table of contents in a column, for the user to re-arrange and to easily dip in and out of the book as he or she sees fit.

The combination of a free environment, a map and a reading space seems fruitful to me at this point. What do you think? Here is the final VR experiment for this session, the same as above, but more intimate, more ‘human scale’. This may waste some VR potential but I think it fits well with the task of making sense of the document. Interactions are still to be discussed, but they will allow for real change in what is displayed.

https://hubs.mozilla.com/RuDomDy

Palms for reading

I did this as a test of how reading could transition to looking for what to see next, such as a table of contents:

Also, from our explorations for the Symposium:

https://thefutureoftext.org/2022/09/19/vr/
